The phrase "AI persona" describes at least three distinct things: AI-generated persona documents, conversational agents built on general training data, and AI systems grounded in specific primary research. These are not the same product, and they are not appropriate for the same uses.

Where traditional personas came from

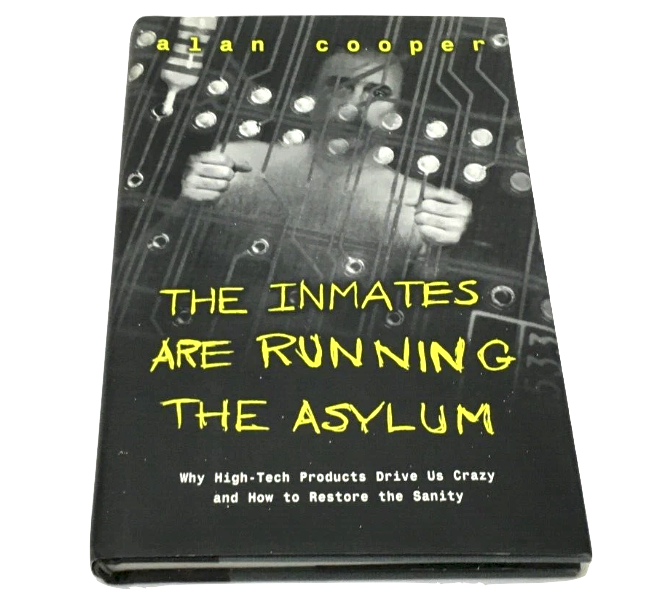

The user persona was developed as a design tool in the 1990s, most closely associated with Alan Cooper's goal-directed design methodology, described in his 1998 book The Inmates Are Running the Asylum.[1] Cooper's original intent was disciplined: rather than designing for a generic "user," teams would define specific, representative archetypes drawn from field research with real participants, capturing goals, behaviours and contexts that could anchor design decisions across a project.

Cooper's method required substantial primary research: interviews, observation and pattern analysis conducted directly with intended users before a single design decision was made. The resulting personas were meant to represent the primary goal of each distinct user group, not their demographic characteristics. This methodological rigour is often lost in how personas are used today.

In practice, personas evolved away from that disciplined origin. Many teams now create them quickly, from limited data, or from assumption and generalisation dressed up as research. The output is typically a document: a name, a photo, demographic information, a set of attributed needs and frustrations, some behavioural patterns. This document gets shared in a kick-off meeting and then, almost invariably, gets filed.

The fundamental limitation of a traditional persona is that it is static. It cannot be questioned. It cannot respond to a new design direction. It cannot tell you how a specific audience segment would react to a particular campaign message, a pricing change, or a new product feature. It represents what the research team believed to be true at the time of writing, but it cannot interrogate that belief against the underlying data, and it cannot evolve as the project evolves.

What AI changed, and what it didn't

AI made it possible to generate persona documents faster. Several tools now take a brief description of a target segment and produce a fully formatted persona in seconds, complete with name, backstory, motivations and pain points. This is faster than doing it manually, but the result is still a static document, and it adds a further problem: the output is based on general training data, not on the specific primary research your organisation conducted about its audience.

A persona generated from general AI training data reflects what large language models have learned from text on the internet about people who might fit a given demographic or psychographic profile. It is, in a meaningful sense, a statistical average of how such people have been described publicly. This may be useful for rapid prototyping, early-stage ideation or workshop facilitation. It is not a reliable basis for consequential decisions about real audiences, because the "persona" does not reflect your research. It reflects a generalised model of what someone like that might be like.

The risk is subtle: AI-generated personas look credible. They are well-structured, plausible and easy to share. Teams that have not examined their epistemics carefully may treat them with the same confidence as personas drawn from real fieldwork. That conflation is where decisions start to drift from actual audience evidence.

What a research-grounded AI persona actually is

A research-grounded AI persona (a Dynamic Persona, in Persona Dynamics' framing) is built differently. Instead of generating responses from general training data, it retrieves and synthesises from a specific corpus: the research documents, interview transcripts, survey data, focus group analyses and other primary evidence that your organisation has gathered about your audience.

The mechanism is retrieval-augmented generation (RAG)[2]: when a user asks the persona a question, the system searches the knowledge base for the most relevant evidence, then generates a response grounded in what those sources actually say. Every response carries a confidence score (a measure of how much of the response is supported by retrieved evidence) and shows the specific source passages it drew on.
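To make the retrieval step concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not Persona Dynamics' implementation. The corpus is a hypothetical in-memory list of passages with invented content and source labels. Relevance is scored with simple bag-of-words cosine similarity, whereas production RAG systems more commonly use dense embeddings and a vector index. The generation step is left out: the hypothetical helper `build_grounded_prompt` only assembles the retrieved, numbered passages into the instruction a language model would receive.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    source: str  # hypothetical source label, e.g. "Study A, section 3"
    text: str


def tokenize(text: str) -> list[str]:
    # Lowercase word tokens; a real system would use a proper tokenizer.
    return re.findall(r"[a-z0-9']+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two term-frequency vectors.
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, corpus: list[Passage], k: int = 2) -> list[tuple[float, Passage]]:
    # Rank every passage by similarity to the question; keep the top k with a nonzero score.
    q = Counter(tokenize(question))
    scored = [(cosine(q, Counter(tokenize(p.text))), p) for p in corpus]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [(s, p) for s, p in scored[:k] if s > 0]


def build_grounded_prompt(question: str, hits: list[tuple[float, Passage]]) -> str:
    # Hypothetical helper: number each retrieved passage so the answer can cite it.
    evidence = "\n".join(f"[{i}] ({p.source}) {p.text}" for i, (_, p) in enumerate(hits, 1))
    return (
        "Answer as the persona, using ONLY the evidence below. "
        "Cite passage numbers, and say so if the evidence does not cover the question.\n"
        f"Evidence:\n{evidence}\n\nQuestion: {question}"
    )


# Invented example passages, for illustration only.
corpus = [
    Passage("Study A, section 3", "Participants distrusted abstract sustainability claims without proof."),
    Passage("Study A, section 5", "Price increases above ten percent triggered switching intent."),
    Passage("Study B, section 2", "Most participants shop on mobile during their commute."),
]
question = "How do they react to sustainability claims?"
hits = retrieve(question, corpus)
print(build_grounded_prompt(question, hits))
```

Here only the sustainability passage shares terms with the question, so it is the single passage passed to the model; a question with no matching evidence returns an empty list, which is exactly the situation a confidence score should surface.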

This matters in two directions. When evidence coverage is strong, the confidence score validates the response: the team can see that the persona's position reflects substantial grounding in the research, not inference or extrapolation. When coverage is weak, because the question touches territory the research does not address, the confidence score flags this and prevents the team from over-relying on a response that is effectively the model guessing. The confidence score is therefore a direct, visible measure of how much of the output is rooted in real human evidence.
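A confidence score of this kind can be defined in several ways. The sketch below assumes one simple, hypothetical definition: the fraction of sentences in a response whose content words substantially overlap a single retrieved passage. The overlap cutoff (`min_overlap=0.5`), the flagging threshold (`threshold=0.6`) and the stopword list are all illustrative assumptions, and lexical overlap is only a crude proxy; a more robust system might check each sentence with an entailment (natural language inference) model instead. It shows both directions: a well-supported answer scores high, while a sentence the evidence does not cover pulls the score down and triggers a low-coverage flag.

```python
import re

# Illustrative stopword list (assumption), used so function words don't count as evidence.
STOPWORDS = {"a", "an", "the", "and", "or", "of", "to", "in", "is", "are",
             "they", "it", "this", "that", "by", "on", "for", "with"}


def content_tokens(text: str) -> set[str]:
    # Lowercase word tokens with common function words removed.
    return {t for t in re.findall(r"[a-z0-9']+", text.lower()) if t not in STOPWORDS}


def sentence_supported(sentence: str, passages: list[str], min_overlap: float = 0.5) -> bool:
    # A sentence counts as supported if at least min_overlap of its content
    # tokens appear in a single retrieved passage.
    tokens = content_tokens(sentence)
    if not tokens:
        return False  # nothing checkable, so not counted as supported
    return any(len(tokens & content_tokens(p)) / len(tokens) >= min_overlap for p in passages)


def confidence_score(response: str, passages: list[str]) -> float:
    # Fraction of response sentences supported by retrieved evidence.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", response.strip()) if s]
    if not sentences:
        return 0.0
    return sum(sentence_supported(s, passages) for s in sentences) / len(sentences)


def review(response: str, passages: list[str], threshold: float = 0.6) -> str:
    # Hypothetical threshold: below it, flag the answer as weakly grounded.
    score = confidence_score(response, passages)
    status = "grounded" if score >= threshold else "LOW COVERAGE: research may not address this"
    return f"{score:.2f} {status}"


# Invented evidence and responses, for illustration only.
passages = [
    "Participants distrusted abstract sustainability claims without proof.",
    "Price increases above ten percent triggered switching intent.",
]
grounded = ("Abstract sustainability claims do not convince me without proof. "
            "A price increase above ten percent would trigger switching.")
weak = ("I would pay extra for faster delivery. "
        "Abstract sustainability claims do not convince me without proof.")
print(review(grounded, passages))
print(review(weak, passages))
```

In the second response, the delivery sentence has no support in either passage, so half the answer is unsupported and it is flagged: the tool is not failing, the research simply does not cover delivery.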

A Dynamic Persona is only as good as the primary research it is built on. Strong evidence produces grounded, trustworthy responses. Thin, assumption-led or poorly sampled data produces outputs that reproduce those limitations faithfully. The platform's confidence scores make this visible: low scores signal where the evidence base needs strengthening, not where the tool is failing. In a Northumbria University deployment study,[3] participants reported that visible grounding scores helped them calibrate trust and assess where their research had gaps.

What this means in practice

The practical difference shows up most clearly in workflow. A traditional persona sits in a slide deck. An AI persona built from your research can be interrogated throughout a project: tested against new creative directions, asked about specific product features, questioned on pricing sensitivity, consulted when a new stakeholder brief arrives mid-project.

Because it draws on your evidence base, it reflects what your research actually found, not a generalised model of what "consumers like this" are assumed to think. If your research found that a specific segment is unusually sensitive to sustainability messaging but unconvinced by abstract claims, the persona reflects that finding. When a new campaign direction is tested against it, the response is grounded in that specific finding, not in a generic model of environmentally conscious consumers.

This also changes what happens to research investment. Traditional personas convert research into a document that then gets filed. Dynamic Personas keep the research active and queryable throughout the project lifecycle, and beyond it. Personas persist across projects, building accumulated knowledge rather than requiring each project to start from scratch. See our article on why research gets filed after the brief for a fuller treatment of this problem.

Where the distinction matters most

For low-stakes exploration (early-stage ideation, rapid concept generation, generating hypotheses to test), the difference between a general AI persona and a research-grounded one may be minimal. General AI personas can be useful stimuli, even if their validity is limited.

The distinction becomes critical in four situations:

- When decisions are consequential. Budget allocations, product launches, campaign commitments: decisions that carry real costs if they turn out wrong require research that reflects your actual audience, not a generalised model.

- When audiences are specific or specialist. General AI training data skews toward mainstream, publicly documented demographics. Niche audiences, specialist professional groups or specific regional populations are systematically underrepresented in any model trained on public internet text.

- When you need to account for your decisions. A stakeholder asking where a recommendation came from needs a traceable answer. "The AI thought so" is not accountable. "Our Q3 audience study found this, and the persona response drew on sections 3, 5 and 7 of that research" is. This matters especially in public sector, regulated and client-accountable contexts.

- When you have existing proprietary research. If your organisation has invested in original research (qualitative, quantitative or mixed), using a general AI persona ignores that investment entirely. A research-grounded persona makes it work harder, across more decisions, for longer.
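Traceability of this kind depends on storing provenance alongside every answer. The following sketch assumes a hypothetical record format in which each persona response keeps its confidence score and a list of (study, section) citations for the passages it drew on. `Citation`, `PersonaResponse` and `audit_trail` are illustrative names, not part of any particular product, and the study name and values in the example are invented.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Citation:
    study: str    # hypothetical study name, e.g. "Q3 audience study"
    section: int  # section of that study the retrieved passage came from


@dataclass
class PersonaResponse:
    question: str
    answer: str
    confidence: float
    citations: list[Citation] = field(default_factory=list)

    def audit_trail(self) -> str:
        # Group cited sections by study (deduplicated and sorted) so a
        # stakeholder can trace the answer back to the research.
        sections: dict[str, set[int]] = defaultdict(set)
        for c in self.citations:
            sections[c.study].add(c.section)
        if not sections:
            return "No research evidence retrieved; treat as unsupported."
        parts = []
        for study, secs in sorted(sections.items()):
            label = "section" if len(secs) == 1 else "sections"
            parts.append(f"{study}, {label} {', '.join(str(s) for s in sorted(secs))}")
        return f"Confidence {self.confidence:.2f}; drew on " + "; ".join(parts)


# Invented example record.
r = PersonaResponse(
    question="Would this segment accept the new pricing?",
    answer="Only if the increase stays modest.",
    confidence=0.82,
    citations=[Citation("Q3 audience study", 5), Citation("Q3 audience study", 3),
               Citation("Q3 audience study", 7), Citation("Q3 audience study", 3)],
)
print(r.audit_trail())
```

Deduplicating sections keeps the trail readable when several retrieved passages come from the same part of a study, and an empty citation list produces an explicit warning rather than a silent answer.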

What AI personas are not: the primacy of primary research

It is worth being direct about limitations, because AI persona tools are sometimes oversold. No AI persona, however well-grounded, is a substitute for direct engagement with real people. There are things that only primary research can provide:

- Information that was never captured in prior research

- The interpretive, contextual judgement of experienced qualitative researchers

- Behavioural evidence from conditions the original research did not cover

- Genuinely novel audience insight, rather than better access to existing knowledge

The role of Dynamic Personas is to make primary research more usable, not to replace it. When a confidence score is low, the appropriate response is not to distrust the tool but to recognise that the underlying evidence base needs expanding. The tool makes the gap visible. Primary research fills it.

What a well-built research-grounded AI persona provides is the findings from primary research, made available to the whole team, throughout the project, in a form that can be interrogated and challenged, with the evidence always visible. That is a meaningful improvement over filing research after the brief. But it is a different claim to replacing the researchers who conducted it and the participants who informed it. For more on how Dynamic Personas compare to synthetic participant tools, see our comparison article.

Summary: three types of AI persona compared

- [1] Cooper, A. (1998). The Inmates Are Running the Asylum: Why High-Tech Products Drive Us Crazy and How to Restore the Sanity. Sams Publishing. See also Cooper's own first-person account of the methodology's origins: The Long Road to Inventing Design Personas, Medium, 2020.

- [2] Lewis, P., Perez, E., Piktus, A., et al. (2020). Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks. Advances in Neural Information Processing Systems (NeurIPS), 33. arXiv:2005.11401. The foundational paper introducing the RAG architecture used in research-grounded persona systems.

- [3] Persona Dynamics / Northumbria University deployment study (2025). Four-week live deployment with MA Communication Design students and Citizen-Centred AI PhD researchers, in partnership with a local authority client. Full methodology and findings are summarised on our Science & Validation page.